Why recompute the tokens?

To compute relations such as Mutual Information or Keyness we need an estimate of the total number of running words (let's call it TNR) in the text corpus from which the data came. It is tricky to decide what actually counts towards the TNR. There are problems to do with hyphenation, apostrophes and other non-letters in the middle of a word, numbers, words cut out because of a stoplist, etc. There is also a decision to make: should TNR in principle include all of those, or only the words or clusters now in the list in question? In practice, for single-word word lists this usually makes little difference. In the case of word clusters, however, there might be a big difference between the TNR counted in words and the TNR counted in clusters. And what exactly is meant by "running clusters" of words anyway, if you think about how they are computed?

For most normal purposes, the total number of running words (tokens) computed when the word list or index was created will be used for these statistical calculations.
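To see why the choice of TNR matters, here is a minimal sketch using the textbook pointwise Mutual Information formula, log2 of observed over expected co-occurrence. This is an assumption for illustration only: the program's own calculation may differ (for example by adjusting for the collocation span), and the function name and all frequencies below are invented.

```python
import math


def mutual_information(joint_freq, node_freq, collocate_freq, total_tokens):
    """Textbook pointwise MI in bits (illustrative, not the program's exact formula)."""
    # Expected co-occurrence if node and collocate were independent.
    expected = node_freq * collocate_freq / total_tokens
    return math.log2(joint_freq / expected)


# Same (invented) frequencies, two different TNR values.
mi_original = mutual_information(20, 100, 50, total_tokens=1_000_000)
mi_recomputed = mutual_information(20, 100, 50, total_tokens=250_000)

print(f"MI with TNR 1,000,000: {mi_original:.2f}")
print(f"MI with TNR   250,000: {mi_recomputed:.2f}")
```

With this formula, dividing the TNR by four lowers every MI score by two bits, so the ranking of collocates stays the same but the absolute scores shift.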

How to do it

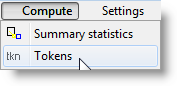

Compute | Tokens

What it affects

Any decision made here will apply equally to the node and the collocate, whether these are clusters or single words, or, in the case of key words calculations, to the small word-list and the reference corpus word-list.

If you do choose to recompute the token count, the TNR will be calculated as the total of the word or cluster frequencies for those entries still left in the list. If any entries have been zapped, or if a minimum frequency above 1 is used, the difference may be quite large.
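The recomputation described above amounts to summing the frequencies of the surviving entries. A minimal sketch, assuming the list is held as a plain dictionary of entry to frequency; the function name `recompute_tokens`, its parameters and the sample data are all hypothetical and not part of the program:

```python
def recompute_tokens(frequencies, min_freq=1, zapped=()):
    """Sum the frequencies of entries still left in the list (hypothetical helper)."""
    removed = set(zapped)
    return sum(
        freq
        for entry, freq in frequencies.items()
        if freq >= min_freq and entry not in removed
    )


# Invented word list whose frequencies add up to a stored TNR of 100.
word_list = {"the": 60, "of": 30, "corpus": 6, "keyness": 3, "zap": 1}
stored_tnr = 100

# Zap "the" and apply a minimum frequency of 3.
recomputed = recompute_tokens(word_list, min_freq=3, zapped={"the"})

print(stored_tnr, recomputed)
```

In this invented example the recomputed total is well under half the stored one, which is the kind of gap that zapping and minimum-frequency settings can produce.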

If you choose not to recompute, the total number of running words (tokens) computed when the word list or index was created will be used.